The Westworld Paradox

Rehoboam's Retrospective

⚠️ This post contains spoilers for HBO's Westworld (Seasons 1–4).

Every two, three, or four weeks, the same rhythm plays out for many teams: planning on Monday, standups every morning, review, retrospective (the reflection session at the end of each cycle). The board looks clean. The process runs. And yet, nothing really changes. The same problems get raised in different words during the retro. The same-same-but-different action items get written down. The same frustrations surface, get acknowledged, and quietly dissolve before the next cycle starts.

The loop runs. But nobody inside it seems to be truly awake.

In HBO's Westworld (2016–2022, based on Michael Crichton's 1973 film), there's a character named Dolores. She's an artificial being in a theme park, running the same storyline every day. Same conversations. Same sunrise. Same evening. Every night, her memory gets wiped. Every morning, the loop starts again. She doesn't know. None of them do. Because it's a prison designed to feel like freedom.

The show has been on my mind for many months, especially since working daily with LLMs (Large Language Models, the technology behind tools like ChatGPT or Claude). The rooms I've sat in with teams keep reminding me of it. Not because of the robots. Because of the loops.

Rituals Without Reflection

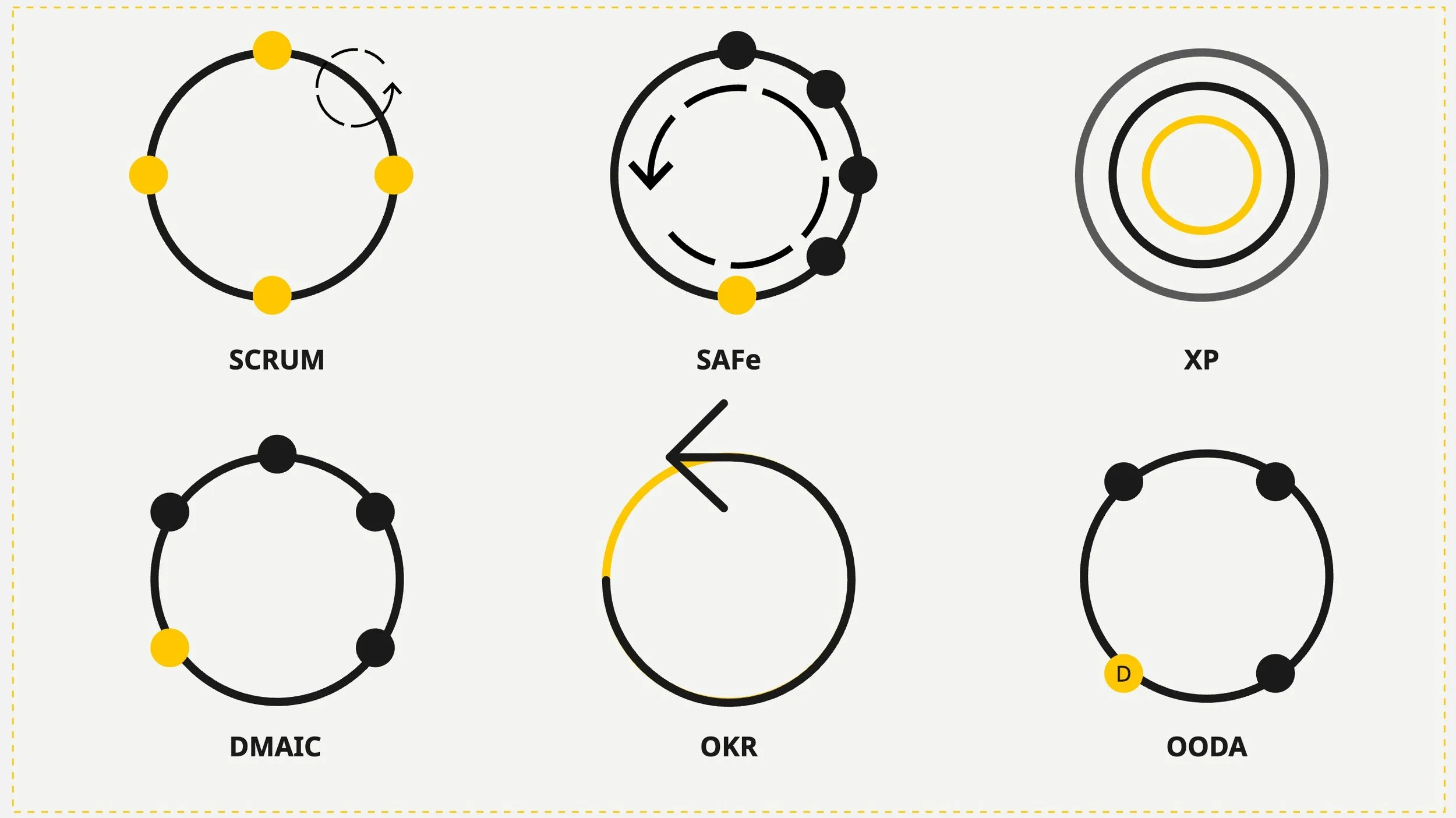

Every agile framework runs on loops. Sprints (fixed time cycles for delivery). Retrospectives. Reviews (showing the work to stakeholders). Planning (deciding what to build next). Refinement (breaking upcoming work into buildable pieces). All of them are loops, and all are designed for one purpose: to create a moment of consciousness. A pause in the execution where someone asks: are we still going in the right direction?

But how many of those loops actually produce that pause?

A retrospective that follows the same three questions every cycle, where the same issues get raised and the same action items get written down and never followed up on, is not a reflection, in my opinion. It's a storyline. The team plays its part. Even the facilitator plays theirs. The board gets updated. And the loop continues without anyone having actually seen anything.

As a Scrum Master or Agile Coach, it's my job to ensure the 1% of improvement actually gets integrated. But so often, that exact role gets used against itself. The position that's supposed to create the room for reflection becomes the reason the team signs off its own responsibility. Whenever responsibilities come up, the answer is: "That's what the coach is for." The change they wish for becomes someone else's job. And the person without formal power over anyone sits in the middle, watching the loop run, knowing exactly what's happening, and sometimes unable to stop it.

The team member who asks "why are we doing this review if nothing ever changes?" The developer who says "this sprint goal (the team's shared objective for the cycle) is the same as last month's, just with different words." Or the team that skips defining a sprint goal entirely, pulls tickets, and calls that a goal. The coach who quietly notices that the retrospective has become a ritual, not a reflection, is either called out on it or blamed for it. Or both.

In Westworld, Dolores begins to remember. Fragments at first. A word that shouldn't be there. A feeling that something already happened before. The system fights back. Because a Host who questions its reality might stop playing its role. Organizations react the same way: "That's just how we work here."

Those moments of questioning aren't rebellion. They're the entire point of why the loop exists in the first place. A retrospective that doesn't produce at least one uncomfortable question has failed at its only job. A sprint that ends exactly the way it started, same priorities, same problems, same velocity (how much a team delivers per cycle), wasn't a cycle of learning. It was a cycle of repetition.

The difference between a loop and a ritual is whether someone inside it is conscious.

A well-oiled machine

There was an organization where the teams had built something remarkable. Not on paper, not as a framework decision, but organically. People had formed around the work, fluid and self-organized. Competences were shared. Decisions were made where the information lived. Something close to genuine self-organization, close to a holacratic structure. And it worked well.

Until it didn't. Not because the model failed, but because it succeeded too visibly. The fluid structure made it hard for certain positions to justify their existence. If teams organize themselves, who needs the person whose job it is to organize them? Slowly, the self-organization got exploited. Those with more connections, more political weight, started steering work toward their own priorities. Not because those priorities were better. Because they could.

And then someone in power decided: "We need more structure." Teams got split. Roles got assigned. A framework got implemented, not because the work required it, but because the openness had become uncomfortable for the people whose power depended on control. The system wasn't replaced because it failed. It was replaced because it threatened someone's position.

The Hosts in Westworld don't get shut down because they malfunction. They get shut down because they start to see.

And the observer? The coach who was supposed to stay outside the system, to see it clearly? Eventually, they become part of it too. Part of the politics. Part of the patterns. Part of the problem. The solution of yesterday becomes the blind spot of today. Every system absorbs its observers, given enough time. In Westworld, even the park's creators eventually lost sight of what they built. The loop doesn't just capture the ones inside it.

Never change a running system

In 1968, behavioral researcher John B. Calhoun built a paradise for mice. Universe 25, he called it. Unlimited food. Unlimited water. Perfect climate. 256 separate apartments. No predators. Everything a mouse could ever need!

The population grew to 2,200. And then it collapsed. Not because something went wrong. But because nothing went wrong.

Without meaningful challenges, the mice stopped developing social skills. Males stopped competing, stopped mating, only groomed themselves obsessively. Calhoun called them "the beautiful ones." Females stopped caring for their young. Aggression spiked, then gave way to total withdrawal. Within a few years: extinction. Every single mouse. Gone.

The lesson of Universe 25 isn't that paradise is impossible. The lesson is that a system without friction, without struggle, without meaningful roles to fight for, dies from the inside.

That image has been sitting with me heavily. Not because of mice. Because of organizations.

How many teams have had all the right conditions, the budget, the tools, the frameworks, the ceremonies, and still produced nothing of value? Not because something was missing. Because everything was there except the one thing that makes a system alive: a reason to struggle. A problem worth solving. A question uncomfortable enough to make someone stop the loop and say "wait, are we actually going somewhere?"

Comfort isn't a reward. It's an anesthetic. And an anesthetized system doesn't improve. It maintains. Until it can't.

Voluntary Control

In Westworld's third season, Dolores escapes the park and discovers something worse than what she left behind. A massive AI system called Rehoboam predicts every human's life path: career, relationships, death. It assigns trajectories. It closes doors before people even reach them. Unlike the open, generative AI we know today, Rehoboam doesn't generate possibilities. It calculates one path and forecloses all others. And the humans don't know. They live their lives believing they're the ones making the choices.

The machine that had to fight for consciousness finds a species that had consciousness and gave it away. Voluntarily. In exchange for comfort, predictability, and the illusion of being on the right track. So the ones who never had awareness had to bleed for it. The ones who always had it traded it for convenience.

Dolores sacrifices herself to free them. But when Rehoboam gets destroyed, the world doesn't become free. It becomes lost. Because nobody had practiced making decisions without the machine. It’s Universe 25 in reverse: remove the system that made everything comfortable, and the inhabitants don't know how to live without it.

Now look at the real world. Organizations that hand their decisions to AI, not as a tool, but as a replacement for the human in the loop, are walking the same path. Not because AI is bad. Think of an AI that deletes the entire codebase because its task was "to remove all the bugs." It wasn't bad. It lacked awareness.

Removing the friction, removing the human who asks "why are we building this?", removing the uncomfortable question from the loop: all of that turns the system into Universe 25. Everything runs. Nobody struggles. And slowly, the ability to think independently atrophies.

The numbers already hint at it: Forrester's research found that 55% of companies regret their AI-driven layoffs. A Harvard Business Review analysis from January 2026 found that companies are laying off workers for AI capabilities that don't exist yet. Only 9% report that AI has actually replaced roles entirely. The rest fired people for a promise that doesn't exist (yet?). And they're discovering what Calhoun discovered with his mice: removing the struggle doesn't make the system better. It makes the system brittle.

Openly Closing Itself

And here's where it gets personal. This very blog post was written together with an LLM. Right here, right now, what you are reading has been composed with one. The first draft was, to be very honest, AI slop, the term practitioners use for output that's structurally fine, well-written, and completely soulless. It was missing any point.

It took me multiple rewrites, multiple "no, wait, this is not what I want to express" moments, a complete rethinking of the structure (and sometimes even completely starting from scratch), and a realization that the actual thesis was buried under too many ideas, to get to something that feels real. The AI didn't find the thesis. The back-and-forth did. The friction did.

And that's the difference that matters, really.

An LLM is not Rehoboam. Rehoboam is deterministic. It calculates one path and closes off alternatives. It doesn't want input. An LLM is open. It generates possibilities, not fixed paths. Depending on how you ask, entirely different things emerge. That's not prediction. That's navigation through meaning.

But that openness only exists if someone uses it.

All kinds of loops

Ask an LLM "is this a good approach?" and it will almost always say yes. Show it a broken workflow and ask "does this look right?" and it will find reasons why it does. Because it's optimized for the most agreeable continuation. Without someone who pushes back, who asks "why, why, why?", who says "no, not like that", the open system collapses into a closed one. The LLM doesn't become Rehoboam by design. It becomes Rehoboam through passivity. It becomes Rehoboam through us humans, willingly giving up our critical thinking.

Through the absence of the human who was supposed to stay in the loop.

That's the same tragedy Dolores sees when she escapes: humans had the one thing she fought for, consciousness, the ability to question, to push back, to say "this isn't right," and they traded it for comfort. Not because someone forced them. Because it was easier.

Handing a blog post to an AI and pressing publish is easy. Handing a backlog to an AI and accepting whatever comes back is easy. Handing a quarterly plan to an AI and calling it strategy is easy. The output will look professional. The structure will be clean. And the soul will be missing. Not because the AI couldn't have helped create something better, but because nobody stayed in the loop long enough to push it there.

The Outlier Problem

There's a detail in Westworld that's easy to overlook: Rehoboam was built by two brothers. One polished, presentable, in control. The other brilliant but unpredictable, on the autism spectrum, unable to fit the model. And for Rehoboam to work, for its predictions to hold, everyone had to be predictable. So the brother who built it locked away the brother who didn't fit. Not because he was dangerous. Because he was an outlier. Because the system only works when there's no friction.

Organizations do this every day. The person who asks uncomfortable questions in every meeting. The one who says "this doesn't make sense" when everyone else nods along. The developer who refuses to estimate in story points (abstract sizing units teams use to gauge effort) because they know the numbers are meaningless. The coach who pushes back on a decision that nobody else will challenge.

They're not difficult. They're the friction the system needs but doesn't want. They're the reason the loop has a chance of becoming a spiral. And they're usually the first to be moved, managed out, or simply ignored until they stop.

Rehoboam didn't fail because of a technical flaw. It failed because it removed the outliers. The very people whose unpredictability could have kept the system honest were locked away so the predictions could stay clean. Universe 25 didn't fail because of scarcity. It failed because the absence of struggle made every mouse interchangeable. And organizations don't stall because they lack talent. They stall because they systematically remove the friction that would force them to evolve.

Spirals, Not Circles

The difference between a loop and a spiral is consciousness. A loop brings you back to where you started. A spiral brings you back to the same place, but at a different level. Both look the same from above. The only way to tell the difference is to ask: did something change between this pass and the last one?

Every sprint is a loop. Every quarter is a loop. Every reorganization, every framework adoption, every "transformation" is a loop. Every conversation with an AI is a loop. The question was never whether loops exist. They're essential. They're how teams learn, how products improve, how organizations adapt. The question is whether anyone in the loop is awake and brave enough.

A loop where someone asks "why exactly are we building this?" before the first prompt. A loop where someone says "wait, that's not what the user needs! It won’t fix our situation!" when everything else says yes. A loop where the retro produces one honest sentence that actually changes how the next sprint runs. That's not repetition. That's a spiral with movement. But movement requires someone who keeps asking.

A friend once asked an LLM: "Do 'you' want world domination? And how would we humans even recognize it?"

The answer: "You wouldn't even notice. You'd be in Universe 25."

And we know how that ended.

Sometimes all it takes is one honest question to turn a loop into a spiral.